When I first started working in MarTech, I focused on signals.

Behavioural events.

Drop-offs.

Segmentation logic.

Data accuracy.

Over time, that focus expanded. I began to see how those signals feed into decision engines, how experimentation platforms turn insight into action, and how governance determines whether optimisation scales safely.

More recently, I have been building automation workflows using n8n to better understand how data and decision logic translate into real operational steps.

That experience changed how I think about personalisation.

Because the moment you move from analysis to orchestration, a new question emerges:

Not just “What does the data tell us?”

But “Who or what decides what happens next?”

And that question sits at the heart of Agentic AI in personalisation.

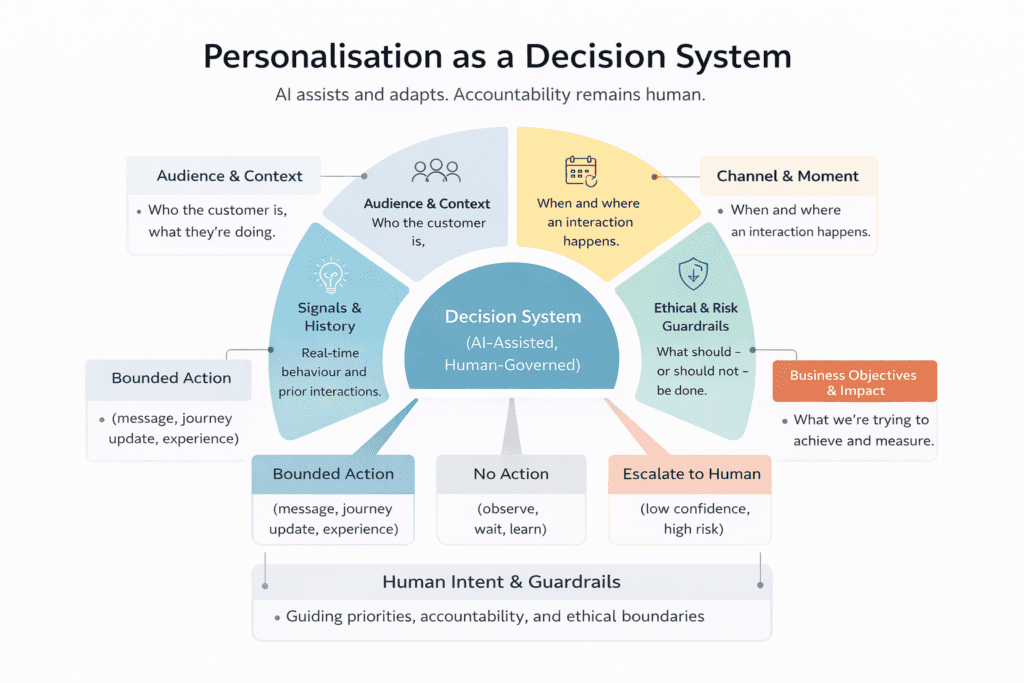

Rethinking Personalisation as a Decision System

In earlier reflections, I wrote about seeing platforms like Adobe Target as decisioning engines rather than simple experimentation tools.

The more I worked with orchestration workflows, the clearer that perspective became.

Personalisation today is not about launching isolated campaigns or testing page variants. It is about coordinating decisions across signals, journeys, and systems.

When I built rule-based workflows in n8n, even at a small scale, I could see how quickly complexity grows:

- A user input triggers a condition.

- That condition routes to different paths.

- Those paths must respect consent, context, and timing.

- Each branch introduces edge cases.

- Each edge case introduces risk.

Even in relatively simple automation, transparency and clearly defined decision boundaries matter.
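
To make the branching above concrete, here is a minimal sketch of one such rule in Python rather than in n8n itself. Everything in it is an assumption for illustration: the `Event` fields, the `cart_abandoned` trigger, the quiet-hours window, and the branch names are hypothetical and do not come from a real workflow.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Event:
    user_id: str
    event_type: str              # hypothetical trigger name, e.g. "cart_abandoned"
    has_marketing_consent: bool
    local_time: time             # user's local time when the workflow runs


# Assumed quiet hours: 21:00 to 08:00 local time.
QUIET_START = time(21, 0)
QUIET_END = time(8, 0)


def in_quiet_hours(t: time) -> bool:
    # The window wraps past midnight, so the two checks are joined with OR.
    return t >= QUIET_START or t < QUIET_END


def route(event: Event) -> str:
    """Return the name of the branch this event should follow."""
    if not event.has_marketing_consent:
        return "suppress"                # consent is checked before anything else
    if event.event_type != "cart_abandoned":
        return "no_action"               # context: only one trigger is handled
    if in_quiet_hours(event.local_time):
        return "defer_until_morning"     # timing
    return "send_reminder"
```

Even this single rule has four outcomes, and the order of the checks matters: moving the consent check below the timing check would defer messages for users who should never receive one.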

At scale, across multiple journeys and channels, that complexity multiplies.

This is where the idea of Agentic AI begins to make practical sense.

Where Automation Ends and Agentic AI Begins

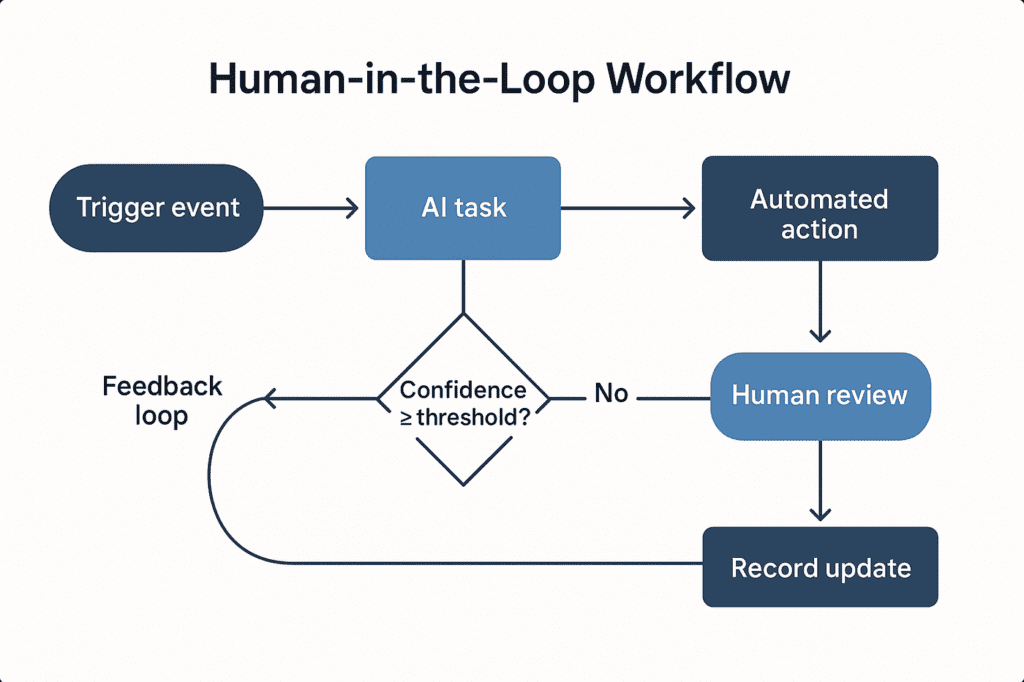

Automation executes predefined logic.

Copilots suggest next steps.

Agentic systems interpret signals, reason within defined constraints, take bounded action, and learn from outcomes.

But the key word is bounded.

Agentic AI in personalisation does not mean handing control to machines. It means structuring decision systems so that assistance can scale safely.
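
One way to picture "bounded" is a guard that sits between what an agent proposes and what actually runs. The sketch below is illustrative only: the action kinds, the 10% audience limit, and the 0.80 confidence floor are assumed values, not recommendations or settings from any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    kind: str              # hypothetical action name, e.g. "pause_variant"
    audience_share: float  # fraction of the audience affected, 0.0 to 1.0
    confidence: float      # the agent's own confidence, 0.0 to 1.0


# Boundaries set by people, not by the agent (illustrative values).
ALLOWED_KINDS = {"adjust_traffic_split", "pause_variant"}
MAX_AUDIENCE_SHARE = 0.10
MIN_CONFIDENCE = 0.80


def decide(action: ProposedAction) -> str:
    """Return "reject", "escalate", or "execute" for a proposed action."""
    if action.kind not in ALLOWED_KINDS:
        return "reject"      # outside the agent's remit entirely
    if action.audience_share > MAX_AUDIENCE_SHARE:
        return "escalate"    # too broad to act on without a human
    if action.confidence < MIN_CONFIDENCE:
        return "escalate"    # too uncertain to act on without a human
    return "execute"
```

The agent can still propose anything. What changes is that only proposals inside the limits run unattended, and everything else is either refused or handed to a person.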

From a data science perspective, this distinction is critical.

Because once a system can act, not just recommend, governance is no longer optional. It becomes structural.

The Real Constraint: Decision Capacity

One insight that keeps resurfacing in my work is that the bottleneck in modern personalisation is not data volume or tooling capability.

It is decision capacity.

The surface area of personalisation continues to grow across signals, journeys, channels, content, and optimisation opportunities.

Human teams cannot manually evaluate every micro-moment.

Without support, optimisation becomes:

- Periodic instead of continuous

- Local instead of holistic

- Reactive instead of contextual

This is where agent-assisted personalisation adds value, not by replacing teams, but by expanding how many decisions humans can supervise effectively.

Where AI Assists

Through building workflows and helping to map out agentic opportunities, I have started to see where AI assistance makes the most practical sense:

- Identifying emerging behavioural patterns automatically

- Surfacing underperforming journeys before they deteriorate

- Flagging conflicting experiences across touchpoints

- Monitoring signal degradation or tracking inconsistencies

- Prioritising optimisation opportunities by expected impact

- Assembling context so interactions do not reset at each channel

None of these remove human judgement.

They support it.
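
As one example from the list above, monitoring signal degradation can start very simply: compare the latest daily count of each tracked event against its recent average and flag sharp drops for a person to look at. The function below is a rough sketch under assumed inputs. The event names, the seven-day baseline, and the 50% drop threshold are all hypothetical.

```python
from statistics import mean


def degraded_signals(daily_counts: dict[str, list[int]],
                     drop_threshold: float = 0.5,
                     baseline_days: int = 7) -> list[str]:
    """Flag events whose latest daily count fell well below the recent baseline.

    daily_counts maps an event name to daily counts ordered oldest to newest.
    """
    flagged = []
    for name, counts in daily_counts.items():
        if len(counts) < baseline_days + 1:
            continue  # not enough history to judge this event
        # Baseline is the mean of the days just before the latest one.
        baseline = mean(counts[-(baseline_days + 1):-1])
        latest = counts[-1]
        if baseline > 0 and latest < baseline * drop_threshold:
            flagged.append(name)
    return flagged
```

The output is a list of event names to review, not a decision. Whether a drop is a tracking fault or a real change in behaviour is still a human call.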

Why Governance Must Be Designed In

As personalisation becomes more continuous and intelligence-driven, governance cannot sit outside the system.

It must be embedded within it.

That includes:

- Visible decision logic

- Human-in-the-loop checkpoints

- Auditability of actions

- Clear ownership of outcomes

- Defined override mechanisms

Agentic AI without governance is not innovation.

It is unmanaged risk.

Responsible personalisation depends not only on what systems can do, but on how intentionally they are designed.
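
Several of the items listed above can live in a single record that travels with each decision. The following is a minimal sketch, assuming an in-memory object and hypothetical field and status names. A real system would persist the history and tie reviewers to actual identities.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    decision_id: str
    action: str
    reason: str                      # visible decision logic, in plain words
    owner: str                       # person or team accountable for the outcome
    status: str = "pending_review"   # human-in-the-loop checkpoint by default
    history: list[tuple[str, str, str]] = field(default_factory=list)

    def _log(self, actor: str, new_status: str) -> None:
        # Every status change is timestamped and attributed (auditability).
        stamp = datetime.now(timezone.utc).isoformat()
        self.history.append((stamp, actor, new_status))
        self.status = new_status

    def approve(self, reviewer: str) -> None:
        if self.status != "pending_review":
            raise ValueError(f"cannot approve from status {self.status!r}")
        self._log(reviewer, "approved")

    def override(self, reviewer: str) -> None:
        # An override is allowed at any point and is always recorded.
        self._log(reviewer, "overridden")
```

Nothing runs until someone approves it, anyone with authority can override it later, and the history shows who did what and when.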

Defining Human Accountability

As systems become more adaptive, the role of humans shifts.

Instead of executing every decision manually, humans increasingly define:

- Business priorities

- Brand standards

- Ethical thresholds

- Consent interpretation

- Risk tolerance

- Escalation and override rules

The more autonomy systems gain, the clearer human accountability must become.

Early exposure to behavioural analytics showed how a small tracking inconsistency can reshape optimisation priorities. Decision engines revealed how models amplify what they are fed. Orchestration workflows highlighted how quickly complexity compounds.

Taken together, these lessons reinforce a simple idea:

Intelligent systems require deliberate supervision.

From Campaigns to Coordinated Decision Systems

What excites me most about this shift is not the automation itself.

It is the move from fragmented optimisation to coordinated decision systems.

When signals flow across journeys, when context follows the customer, and when decisions are aligned to shared intent, experiences begin to feel connected rather than reactive.

Nothing “magical” happens.

Systems do not become autonomous masterminds.

They become structured assistants operating within boundaries defined by people.

And that is where agentic personalisation becomes both powerful and responsible.

The Shift I Am Starting to See

Looking back at my earlier reflections, I learned that:

- data accuracy shapes decisions.

- decision engines learn from signals.

- orchestration connects insight to action.

Now I am learning that the real evolution is not automation.

It is coordination.

As AI capabilities accelerate, the need for clarity, accountability, and trust does not diminish. It becomes more important.

Personalisation may become more intelligent.

But it is still about people.

And the responsibility for those experiences remains human.

About The Author

Elena Micu

Elena Micu is a Data Science Intern at Dexata with a strong interest in MarTech, customer experience, AI, and automation. Her work focuses on translating behavioural data into insight and contributing to scalable, trusted foundations for responsible personalisation and measurable customer impact.